For the owner or developer of a lean website (one with minimal pages, focused content, and streamlined code), encountering a Google Search Console Coverage report can be a puzzling experience. The report, designed to catalog every URL Google discovers, often presents a tableau that seems to contradict the very leanness of the site.

The Unsexy Power of a Manual XML Sitemap

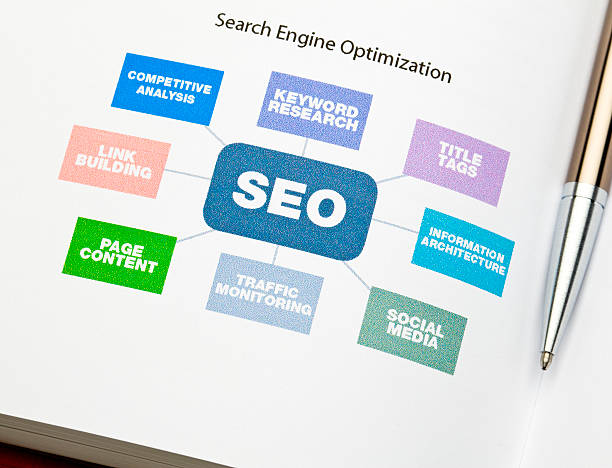

Forget the complex jargon and expensive tools for a moment. In the world of do-it-yourself SEO, one of the most powerful, low-cost technical moves you can make is manually creating and submitting an XML sitemap. This isn’t about fancy plugins or automated services that promise the moon. This is about taking direct, unambiguous control of how you tell search engines about your website’s most important pages. For startup marketers watching every penny, mastering this simple file is a fundamental hack that delivers disproportionate results.

An XML sitemap is, at its core, a straightforward list. It is a file written in XML, a markup format that search engines read, explicitly listing the URLs you want them to know about. Think of it as a prioritized guest list you hand to the bouncer at the club, ensuring your most important friends get inside. Your website might have dozens or hundreds of pages, but search engine crawlers can miss some, especially if your site is new, has limited internal links, or contains pages that aren’t well-connected. A manual sitemap cuts through this uncertainty. You are not leaving discovery to chance; you are providing a direct roadmap of the URLs you consider canonical.

Creating this file manually is simpler than it sounds. You start with a plain text editor, like Notepad or a free code editor. The structure is rigid but easy to follow. You open with a declaration that defines it as an XML file, then wrap your entire list of URLs in opening and closing urlset tags, with the opening tag declaring the sitemap namespace. Each individual page gets its own pair of url tags. Within those, the only required element is loc, the exact, absolute location of the page. You can also add lastmod (the date the page was last modified), changefreq (how often it typically changes), and priority (how important it is relative to other pages on your site). All three are hints, not hard commands, and they do not carry equal weight: Google’s documentation says it ignores priority and changefreq and uses lastmod only when it is consistently accurate, so lastmod is the one worth keeping up to date. You must be meticulous with your syntax, because a single missing slash can break the file, but the process forces a valuable audit of your site’s key content.
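If you would rather not type every tag by hand, the same structure can be sketched in a few lines of Python using only the standard library. Treat this as an illustration, not the one right way to do it: the example.com URLs, the dates, and the names `PAGES` and `build_sitemap` are all placeholders invented for this sketch.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

# Hypothetical pages for illustration: (absolute URL, last-modified date as YYYY-MM-DD).
PAGES = [
    ("https://www.example.com/", "2024-01-15"),
    ("https://www.example.com/pricing", "2024-01-10"),
]


def build_sitemap(pages):
    """Return the sitemap as a string: XML declaration, <urlset>, one <url> per page."""
    # Register the sitemap namespace as the default so tags are written without a prefix.
    ET.register_namespace("", SITEMAP_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = loc
        ET.SubElement(url, f"{{{SITEMAP_NS}}}lastmod").text = lastmod
    # ET.indent exists from Python 3.9 on; it only affects readability.
    if hasattr(ET, "indent"):
        ET.indent(urlset)
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


print(build_sitemap(PAGES))
```

Printing the result shows the exact file you would otherwise type by hand; saving that output as sitemap.xml gives you the file to upload. The sketch leaves out priority and changefreq on purpose, since loc is the only required element.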

Once your sitemap is built, save it as “sitemap.xml” and upload it to the root directory of your website, the same main folder where your homepage lives. The job is only half done at this point. Creation is pointless without submission. This is where you move from talking to yourself to having a conversation with the search engines. For Google, you use Google Search Console. Add a property for your site, verify ownership through one of their simple methods, navigate to the Sitemaps section, and submit the full URL of your sitemap file. For Bing, the process is nearly identical using Bing Webmaster Tools. This act of submission is your formal ping, a direct line saying, “Here is my updated roadmap, please use it.”
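Because a hand-written file is easy to break, it can be worth running a quick sanity check before you upload and submit. The sketch below is one possible way to do that in Python; `check_sitemap` is a hypothetical helper written for this article, and it only covers the basics (well-formed XML, the right root element, absolute URLs), not everything a search engine validates.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def check_sitemap(xml_bytes):
    """Return a list of problems found; an empty list means the basic checks passed."""
    try:
        root = ET.fromstring(xml_bytes)
    except ET.ParseError as exc:
        # A missing slash or unclosed tag ends up here.
        return [f"not well-formed XML: {exc}"]

    problems = []
    if root.tag != f"{{{SITEMAP_NS}}}urlset":
        problems.append("root element is not <urlset> in the sitemap namespace")

    for i, url in enumerate(root.findall(f"{{{SITEMAP_NS}}}url"), start=1):
        loc = url.find(f"{{{SITEMAP_NS}}}loc")
        text = (loc.text or "").strip() if loc is not None else ""
        # Sitemap URLs must be absolute, including the protocol.
        if not text.startswith(("http://", "https://")):
            problems.append(f"entry {i}: missing or non-absolute <loc>")
    return problems
```

To use it, read your file as bytes (for example with `open("sitemap.xml", "rb").read()`) and pass the result to `check_sitemap`. An empty list means the file passed these checks; it does not guarantee that Search Console will accept every entry.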

The true hack here is not just in the action but in the mindset it builds. Manually crafting your sitemap forces you to critically evaluate your site’s structure. You must decide which pages are truly essential, which are your priority, and which might be forgotten. It highlights orphaned content and clarifies your site’s hierarchy. This hands-on process provides a level of understanding and control that automated generators simply cannot match. You are not relying on a tool’s interpretation; you are enforcing your own SEO strategy at the most basic, technical level. For the startup marketer, this direct control is a low-cost, high-impact foundation. It cannot guarantee indexing, but it puts your most valuable content, the pages you’ve invested in, on the radar of the very algorithms that can make or break your early growth. Do not outsource this fundamental understanding. Build the map yourself, submit it yourself, and own that piece of your technical SEO foundation.