In the intricate chess game of search engine optimization, a competitor’s backlink profile is not merely a list of URLs; it is a treasure map to their authority, revealing the strategic partnerships, content victories, and digital relationships that fuel their rankings. To reverse engineer this profile strategically is to move beyond simple imitation toward intelligent, sustainable link acquisition.

The Art of Structure: Organizing Reverse Engineering Findings for Clarity and Impact

The process of reverse engineering is a meticulous dance between discovery and deduction, in which an understanding of a system is painstakingly assembled from fragments of observed behavior and structure. However, the true value of this intellectual endeavor lies not in the moment of insight alone but in the ability to communicate, reference, and build upon those insights. Organizing findings is therefore not a mere clerical task but a strategic methodology that mirrors the investigative process itself, evolving from raw observation into a coherent, layered narrative. The most effective approach is a living, hierarchical documentation system that begins with chronological raw data and culminates in synthesized, actionable knowledge.

The foundation of any robust organization scheme is the immutable laboratory notebook. This initial layer should capture every observation, hypothesis, and test in a strict chronological log, complete with timestamps. These are the raw, unadulterated facts: memory dumps, packet captures, disassembly snippets, unexpected outputs, and even failed experiments. The purpose here is fidelity and context, ensuring that no detail is lost and that the sequence of discovery is preserved. This log serves as the ultimate source of truth, a bedrock of data from which all higher-order understanding is derived. Modern tools may digitize it as a searchable repository of files, screenshots, and notes, but the principle remains: this layer is for capturing, not yet for interpreting.
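As a minimal sketch, assuming Python 3.9+ and a hypothetical `LabNotebook` class (not a tool the text names), the append-only discipline can be enforced in code: entries are frozen once written, each carries a UTC timestamp, and backdated entries are rejected so the log stays strictly chronological. Large artifacts such as packet captures or memory dumps are referenced by a path field rather than embedded.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Entry:
    """One notebook entry; frozen so it cannot be edited after the fact."""
    timestamp: str                  # ISO 8601 string, UTC
    kind: str                       # e.g. "observation", "hypothesis", "test", "failed"
    text: str
    artifact: Optional[str] = None  # hypothetical path to a dump, capture, or screenshot


class LabNotebook:
    """Hypothetical append-only log: entries can be added, never changed or removed."""

    def __init__(self) -> None:
        self._entries: list[Entry] = []
        self._last: Optional[datetime] = None

    def record(self, kind: str, text: str, artifact: Optional[str] = None,
               when: Optional[datetime] = None) -> Entry:
        # `when` should be timezone-aware; default to the current UTC time.
        if when is None:
            when = datetime.now(timezone.utc)
        # Refuse backdated entries so the log preserves the order of discovery.
        if self._last is not None and when < self._last:
            raise ValueError("entries must be recorded in chronological order")
        entry = Entry(when.isoformat(), kind, text, artifact)
        self._entries.append(entry)
        self._last = when
        return entry

    @property
    def entries(self) -> tuple[Entry, ...]:
        return tuple(self._entries)  # read-only snapshot for callers

    def search(self, term: str) -> list[Entry]:
        """Case-insensitive substring search over entry text."""
        term = term.lower()
        return [e for e in self._entries if term in e.text.lower()]

    def to_jsonl(self) -> str:
        """Serialize as JSON Lines, one entry per line, oldest first."""
        return "\n".join(json.dumps(asdict(e)) for e in self._entries)
```

Failed experiments get their own `kind` rather than being deleted, which keeps them searchable later when a discarded hypothesis turns out to matter.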

As patterns emerge from the chaos of raw data, the documentation must support synthesis. This is where a thematic, hierarchical structure comes to the fore. Findings should be grouped by logical components of the target system (authentication routines, communication protocols, file formats, or specific modules) rather than by the date they were discovered. Within each component, the documentation should follow a natural flow, usually moving from the external interface inward. For instance, document the observed network API calls before detailing the internal function that parses them. This layer transforms chronological notes into structured knowledge, typically in the form of detailed reports, annotated code, or diagrams that explain relationships and control flow.
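The regrouping step can be sketched in Python under a few assumptions that are ours, not the text's: each finding is tagged with a component name, a layer label drawn from a hypothetical ordered `LAYERS` tuple running from the external interface inward, and a `source` pointer back to the raw log entry it was distilled from. `Finding`, `organize`, and `render_outline` are illustrative names only.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical layer ordering, outermost (external interface) first.
LAYERS = ("interface", "parsing", "logic", "storage")


@dataclass
class Finding:
    component: str  # e.g. "authentication", "network protocol"
    layer: str      # must be one of LAYERS
    summary: str
    source: str     # pointer back to the raw log entry this came from


def organize(findings):
    """Group findings by component, then order each group from the outside in."""
    grouped = defaultdict(list)
    for f in findings:
        grouped[f.component].append(f)
    # sorted() is stable: findings on the same layer keep their input order.
    return {
        component: sorted(items, key=lambda f: LAYERS.index(f.layer))
        for component, items in sorted(grouped.items())
    }


def render_outline(organized):
    """Render the grouped findings as an indented plain-text outline."""
    lines = []
    for component, items in organized.items():
        lines.append(component)
        for f in items:
            lines.append(f"  [{f.layer}] {f.summary} (see {f.source})")
    return "\n".join(lines)
```

Because Python's `sorted` is stable, findings on the same layer keep the chronological order in which they were fed in, so the sequence of discovery survives inside each component, and every line still points back to its raw log entry.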

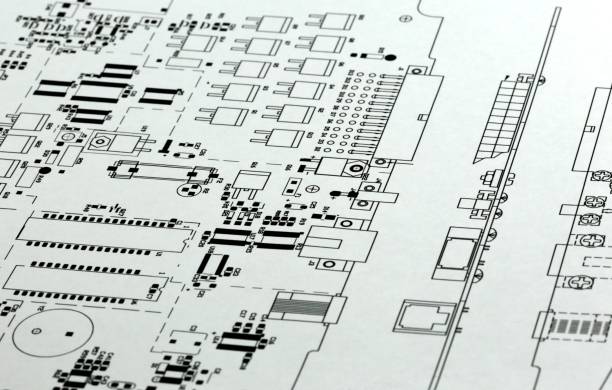

Crucially, the crown of this organized effort is the executive summary and the high-level architectural diagram. After the deep, technical details have been cataloged, one must step back and construct a clear, overarching narrative. This synthesis answers the fundamental “what” and “why”: what is the system’s overall design and purpose, and why does it behave the way it does? A well-crafted diagram that maps the major components and their interactions is worth thousands of lines of disassembly. This top-level view makes the findings accessible not only to the engineer who did the work but also to stakeholders, colleagues, or your future self who may need a quick refresher without delving into the granular details of a specific function’s stack frame.
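One low-friction way to keep the architectural diagram in step with the notes is to generate it. The sketch below assumes Graphviz as the renderer (an assumption; the text names no tool) and emits DOT text from a dictionary of components and a list of interactions. `to_dot` and the example component names are hypothetical.

```python
def to_dot(components, interactions, title="System overview"):
    """Emit Graphviz DOT text for a high-level architecture diagram.

    components: dict mapping component name -> one-line purpose
    interactions: list of (source, target, label) tuples
    """
    def q(s):
        # Quote a DOT string, escaping embedded double quotes.
        return '"' + s.replace('"', '\\"') + '"'

    lines = [f"digraph {q(title)} {{", "  rankdir=LR;", "  node [shape=box];"]
    for name, purpose in components.items():
        # In DOT, a literal backslash-n inside a label starts a new line.
        node_label = q(name + "\\n" + purpose)
        lines.append(f"  {q(name)} [label={node_label}];")
    for src, dst, label in interactions:
        # Reject edges to components the analysis never documented.
        for end in (src, dst):
            if end not in components:
                raise KeyError(f"interaction references unknown component: {end}")
        lines.append(f"  {q(src)} -> {q(dst)} [label={q(label)}];")
    lines.append("}")
    return "\n".join(lines)
```

Writing the output to `overview.dot` and running `dot -Tsvg overview.dot -o overview.svg` renders it. Raising on an unknown component catches a diagram that has drifted out of step with the component-level notes.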

Ultimately, the best organization system is both a mirror and a map. It mirrors the analytical journey from effect back to cause, and it maps the recovered territory of the target system in a way that is navigable for various purposes. Whether the goal is vulnerability disclosure, interoperability, security hardening, or simply learning, structured findings prevent critical insights from being buried in an avalanche of notes. By layering documentation, from the raw chronological log, through component-based analysis, to the synthesized high-level overview, the reverse engineer builds not just an understanding but a transferable and enduring body of knowledge. This disciplined approach ensures that the intellectual capital gained from countless hours of analysis is preserved, clear, and ready to inform the next challenge.
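To close the loop, the three layers can be assembled into a single report ordered for readers (overview first, raw log last) while checking that every component-level finding still cites an entry in the raw log. This is a minimal Python sketch; `build_report`, its arguments, the `log-N` identifiers, and the Markdown layout of the output are assumptions chosen for illustration, and the raw log is assumed to be a dict built in chronological order.

```python
def build_report(summary, components, log):
    """Assemble the three documentation layers into one Markdown report.

    summary: str, the executive summary (top layer)
    components: dict of component name -> list of (finding, log_id) pairs
    log: dict of log_id -> raw entry text, inserted in chronological order
    """
    # Traceability check: every synthesized finding must cite a raw entry.
    missing = [lid for items in components.values()
               for _, lid in items if lid not in log]
    if missing:
        raise ValueError(f"findings cite unknown log entries: {missing}")

    out = ["# Overview", summary, "", "# Components"]
    for name in sorted(components):
        out.append(f"## {name}")
        out.extend(f"- {finding} [{lid}]" for finding, lid in components[name])
    out += ["", "# Appendix: raw log"]
    # Dicts preserve insertion order, so the appendix stays chronological.
    out.extend(f"- [{lid}] {text}" for lid, text in log.items())
    return "\n".join(out)
```

Failing loudly on a dangling citation is the point of the design: a synthesized claim that can no longer be traced to its raw observation is exactly the kind of finding that becomes unverifiable later.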