Architecting Clarity: A Strategic Approach to Sitemaps for Large-Scale Websites

Managing a website with thousands of pages is akin to curating a vast library; without a meticulous organizational system, valuable content becomes lost and inaccessible. The structure of your sitemaps, both for users and search engines, is the cornerstone of this system. For a large website, a monolithic, single sitemap is an antiquated and inefficient approach. Instead, the strategy must evolve into a hierarchical, modular architecture that mirrors the logical segmentation of your content and scales with your ambitions.

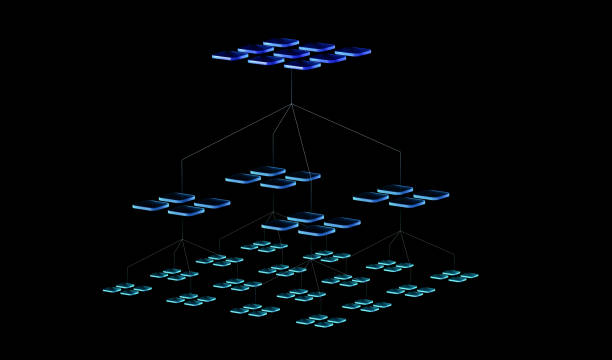

The foundation of this structure is a sitemap index file, which acts as the master directory. This XML file does not contain page URLs itself but points to a series of subsidiary sitemap files. This division is critical for both technical and practical reasons. The sitemap protocol, as enforced by search engines like Google, limits each sitemap file to 50,000 URLs and 50 MB uncompressed, a ceiling a large site can quickly reach. By segmenting URLs into multiple, themed sitemaps, you create a manageable framework. More importantly, this lets you compartmentalize your content into logical silos (such as product categories, blog archives, support documentation, or regional subdirectories), which makes updates and error identification significantly more efficient.
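As a rough illustration of the index-plus-children layout, the following Python sketch splits each content section into files of at most 50,000 URLs and builds an index that points to them. The file naming scheme (`sitemap-<section>-<n>.xml`), the `build_site` helper, and the `example.com` URLs are assumptions made for this example, not a prescribed convention.

```python
from xml.etree import ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000  # per-file URL limit from the sitemap protocol


def chunk(urls, size=MAX_URLS_PER_FILE):
    """Split a list of URLs into consecutive lists of at most `size` items."""
    return [urls[i:i + size] for i in range(0, len(urls), size)]


def build_urlset(urls):
    """Return the XML text of one child sitemap listing the given URLs."""
    root = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        ET.SubElement(ET.SubElement(root, "url"), "loc").text = url
    return ET.tostring(root, encoding="unicode")


def build_index(sitemap_urls):
    """Return the XML text of a sitemap index pointing at child sitemaps."""
    root = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for url in sitemap_urls:
        ET.SubElement(ET.SubElement(root, "sitemap"), "loc").text = url
    return ET.tostring(root, encoding="unicode")


def build_site(base_url, sections, size=MAX_URLS_PER_FILE):
    """Map file names to XML text: chunked child sitemaps plus one index.

    `sections` maps a section name (e.g. "products") to its list of URLs.
    The file naming scheme here is hypothetical.
    """
    files = {}
    for name, urls in sections.items():
        for n, part in enumerate(chunk(urls, size), start=1):
            files[f"sitemap-{name}-{n}.xml"] = build_urlset(part)
    children = [f"{base_url}/{fname}" for fname in files]
    files["sitemap-index.xml"] = build_index(children)
    return files
```

Calling `build_site("https://example.com", {"products": product_urls, "blog": blog_urls})` returns a dictionary of file names and XML strings that a deployment step could write to disk. Only the index URL would then need to be submitted to search engines.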

Within this indexed framework, the principle of thematic clustering should guide the creation of each individual sitemap. A sprawling e-commerce site, for instance, might have separate sitemaps for different product lines, another for its blog articles organized by year or topic, and another for its legal and support pages. This mirrors a well-planned information architecture and provides clear signals to search engines about the relationship between pages. It is not enough to simply list URLs; strategic prioritization through the […]
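One simple way to approximate thematic clustering is to group URLs by their first path segment. The Python sketch below assumes a site whose URL paths begin with `/products/`, `/blog/`, `/support/`, or `/legal/`; the `THEMES` mapping and the theme names are hypothetical and would need to match your own information architecture.

```python
from collections import defaultdict
from urllib.parse import urlparse

# Hypothetical mapping from first path segment to sitemap theme.
# Legal and support pages share one sitemap, as in the example above.
THEMES = {
    "products": "products",
    "blog": "blog",
    "support": "support-legal",
    "legal": "support-legal",
}


def theme_for(url, default="misc"):
    """Pick a theme from the first path segment of the URL."""
    segments = [s for s in urlparse(url).path.split("/") if s]
    first = segments[0] if segments else ""
    return THEMES.get(first, default)


def cluster(urls):
    """Group URLs into {theme: sorted list of URLs}."""
    groups = defaultdict(list)
    for url in urls:
        groups[theme_for(url)].append(url)
    return {theme: sorted(group) for theme, group in groups.items()}
```

Each resulting group can then be written out as its own child sitemap. URLs that match no known theme fall into a `misc` bucket, which makes uncategorized content easy to spot.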

Crucially, this technical infrastructure must be complemented by a parallel, user-facing navigation sitemap. This HTML page, often linked in the footer, provides a human-readable overview of the site’s primary sections. It should not attempt to list every single page but rather serve as a high-level directory, reinforcing the main thematic pillars of your website and offering users a clear, alternative path to major content hubs. This dual-sitemap approach satisfies both the algorithmic needs of crawlers and the practical needs of visitors, creating a cohesive experience.
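For the user-facing page, a high-level directory can be rendered from a short, curated list of sections rather than from the full URL inventory. The following Python sketch is one possible approach; the `render_html_sitemap` function and its input shape are assumptions for illustration.

```python
from html import escape


def render_html_sitemap(sections):
    """Render a nested <ul> from {section title: [(label, href), ...]}."""
    parts = ["<ul>"]
    for title, links in sections.items():
        parts.append(f"  <li>{escape(title)}")
        parts.append("    <ul>")
        for label, href in links:
            # Escape both the attribute value and the visible label.
            link = f'<a href="{escape(href, quote=True)}">{escape(label)}</a>'
            parts.append(f"      <li>{link}</li>")
        parts.append("    </ul>")
        parts.append("  </li>")
    parts.append("</ul>")
    return "\n".join(parts)
```

Keeping the input to a handful of sections and hub pages preserves the page's role as an overview rather than an exhaustive listing.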

Finally, the structure is not a set-and-forget endeavor but a living system demanding rigorous maintenance. A large website is in constant flux, with pages being added, removed, or updated. Implementing an automated generation process, typically via your content management system or a server-side script, is non-negotiable. This ensures your sitemaps are dynamically updated, reflecting the current state of the site without manual intervention. Regular audits using tools like Google Search Console are essential to monitor crawl errors, identify URLs blocked by robots.txt, and ensure your sitemaps are being processed correctly. The goal is to create a self-regulating ecosystem where the sitemap structure not only organizes your present content but is agile enough to adapt to future growth and change.
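Part of this auditing can be approximated locally before a sitemap is published. As a minimal sketch, the Python snippet below uses the standard library's `urllib.robotparser` to flag sitemap URLs that a given robots.txt would disallow. It works on text already in memory, the function names are hypothetical, and it is a pre-check rather than a substitute for the reports in Google Search Console.

```python
from urllib.robotparser import RobotFileParser
from xml.etree import ElementTree as ET

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_locs(xml_text):
    """Extract every <loc> value from a sitemap's XML text."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall(".//sm:loc", NS) if loc.text]


def blocked_urls(xml_text, robots_txt, user_agent="*"):
    """Return sitemap URLs that the given robots.txt rules disallow."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [
        url for url in sitemap_locs(xml_text)
        if not parser.can_fetch(user_agent, url)
    ]
```

A scheduled job could run `blocked_urls` against each generated child sitemap and fail the build when the list is non-empty, so that contradictory signals (a URL both listed and blocked) are caught early.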

Therefore, structuring sitemaps for a large website is an exercise in strategic information architecture. By implementing a master index file, segmenting into thematic child sitemaps, maintaining a user-friendly HTML counterpart, and committing to automated upkeep, you construct a robust framework. This framework does more than merely list URLs; it actively guides both search engine crawlers and human visitors through your digital landscape, ensuring that even amidst thousands of pages, relevance and clarity prevail.